The “science of reading” (SOR) movement, consisting of the media, parents, and politicians, has painted itself into a corner. And like cornered animals, its advocates often react with anger.

The SOR movement's self-inflicted corner is this: demanding a narrow use of “science” from everyone else while not meeting that demand themselves:

It is clear that the repeated critiques of literacy teacher preparation expressed by the SOR community do not employ the same standards for scientific research that they claimed as the basis for their critiques. However, to dismiss these critiques as unimportant would ignore the reality of consequences, both current and foreseen, for literacy teacher preparation. Consider the initiatives underway despite the fact that there is almost no scientific evidence offered in support of these claims or actions.

Hoffman, J.V., Hikida, M., & Sailors, M. (2020). Contesting science that silences: Amplifying equity, agency, and design research in literacy teacher preparation. Reading Research Quarterly, 55(S1), S255–S266. Retrieved July 26, 2022, from https://doi.org/10.1002/rrq.353

Increasingly, scholars have shown that the SOR movement is a misinformation movement that depends on bullying, not “science.” And for a few years now, I have experienced and witnessed SOR advocates responding to evidence-based tweets with anger, mischaracterizations, and personal attacks.

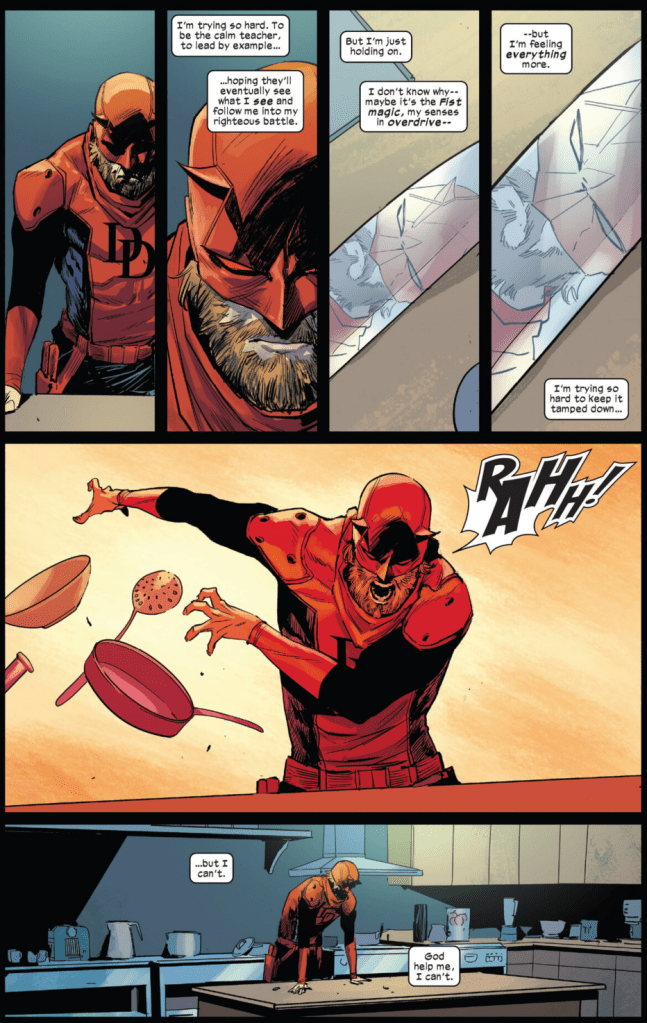

And thus, this passage from Bertrand Russell and panels from Daredevil 6 (v.7) resonate with me:

The SOR social media anger is grounded, I think, in the impossible corner SOR advocates have created. When I have posted scientific research about dyslexia (notably Orton-Gillingham) or LETRS, I have been viciously attacked simply for noting that O-G and LETRS lack scientific support yet are embraced by the SOR movement.

I have never said O-G or LETRS is ineffective; I have never rejected or endorsed either. I have simply noted that if we are saying any program or approach must be scientific, neither meets that standard.

What is even more concerning is that the entire SOR movement itself fails the test of implementation science, as outlined, for example, in Leveraging Evidence-based Practices: From Policy to Action by Ronnie Detrich, Randy Keyworth, and Jack States.

Detrich, Keyworth, and States provide an excellent example of why the SOR movement is doomed by its own standards, how even high-quality “science” fails its own standards, and why this reading war is yet another cycle of the same misguided claims and idealistic solutions.

“Policy without evidence is just a guess and the probability of benefit is likely to be low,” Detrich, Keyworth, and States explain, adding, “Evidence without policy is information that is unlikely to have impact as it has limited reach.”

When I stated that LETRS fails scientific scrutiny, several SOR advocates responded by pointing to the lack of fidelity in implementing LETRS. That response leads directly to what we know from implementation science:

The development of evidence-informed policy is not sufficient to assure the benefits of the policy will be realized. Policies must actually be implemented well if they are to have impact. Many education policies have been enacted without any meaningful impact on educational outcomes. Often this was because there was no comprehensive, coherent plan for implementing the policy. Implementation science is defined as the study of factors that influence the full and effective use of innovations (National Implementation Research Network, 2015) and brings coherence to the implementation of policies. It is the third leverage point that can be utilized to turn policy into meaningful action, thus achieving desired outcomes. It is the bridge between policy, evidence-based practices, and improved outcomes for students. Without implementation science, the aspirations of evidence-informed policy, no matter how well intentioned, are not likely to result in benefit for students.

Leveraging Evidence-based Practices: From Policy to Action, Ronnie Detrich, Randy Keyworth, and Jack States

That last sentence is extremely important because it speaks to the very high stakes involved in reform as well as the nearly impossible task of implementing reform in ways that can be identified as successful.

Some of the inevitable traps of reform are identified in implementation science:

Policy is made broadly but implemented locally. Policy is generally made at a distance removed from the local context in which it is to be implemented and all of the differences across implementation settings cannot be anticipated. … It has been argued that because of the complexities of differing contexts, the concept of evidence-informed policy is not realistic (Greenhalgh & Russell, 2009). The concern is that the research context is so different from the local context as to make research evidence irrelevant.

Evidence-informed policy can prescribe what to do, but not how to do it in a specific context. Those with the best understanding of that context are in a better position to make those decisions. At the local level decisions about how to best implement an evidence-informed policy requires professional judgment and a clear understanding of the values of the local community. Conceptualizing evidence-informed policy as a decision-making framework addresses many of the concerns about the feasibility of it being realistic to address issues of context (Greenhalgh & Russell, 2009).

…[T]here are complex issues to be solved if evidence is to influence policy. There is an implication that policymaking is a rationale process in the sense that if the evidence is available policymakers will act on it; however, the formulation of policy is influenced by a number of factors other than evidence. A challenge for those advocating evidence-informed policy is that policymakers bring their own political and personal biases to the task. In instances when evidence conflicts with political and personal preferences, preferences usually prevail and evidence is discounted (Gambrill, 2012) [emphasis added].

Leveraging Evidence-based Practices: From Policy to Action, Ronnie Detrich, Randy Keyworth, and Jack States

What the SOR movement demonstrates is the essential flaw of advocacy grounded in missionary zeal; and thus, “If evidence is to play a central role in influencing policy, then the challenges of overcoming personal biases, political considerations, advocacy groups, and financial incentives must be confronted.”

In short, when SOR advocates challenge existing market forces driving reading program adoption, they seem incapable of seeing how that same market dynamic is shaping their own movement.

As well, the SOR movement is trapped in an idealistic and simplistic use of “science” (along with a misunderstanding of meta-analyses):

A limitation of experimental evidence is that one experimental study is never sufficient to definitively answer a question about what should be done and is a poor basis for formulating policy. If there is a body of literature, the common approach by education scholars has been to review the extant literature and make a reasoned judgment about what should be done. Policymakers are not necessarily prepared to conduct a review of the literature and come to reasonable conclusions about what should be done as a matter of policy. An alternative to the narrative type of review is a systematic review or meta-analysis that summarizes a body of research and can inform policymakers about the general effect of a practice. A significant advantage of meta-analysis for policymakers is that it provides a single score (effect size) that best estimates the strength of an intervention across populations, settings, and other contextual variables. Program evaluation is another type of evidence that is valuable to policymakers. It provides feedback about the effectiveness of a program or practice and can provide insights about how policies can be changed to increase benefit.

Leveraging Evidence-based Practices: From Policy to Action, Ronnie Detrich, Randy Keyworth, and Jack States

Often, little weight is given to teacher-based evidence because it doesn’t meet the narrow definition of “science” that has painted SOR advocates into a corner:

Similarly, practitioners seeking answers to challenges they are facing can collect data about the frequency of occurrence, the contexts in which they are most likely to occur, and the differences between the contexts in which the problem occurs and does not occur. Practice-based evidence is the essence of data-based decision making (Ervin, Schaughency, Mathews, Goodman, & McGlinchey, 2007; Stecker, Lembke, & Foegen, 2008). Single participant designs are commonly used in data-based decision making. The unit of analysis can be an individual to determine if she is benefiting from an intervention and is common in response to intervention approaches (Barnett, Daly, Jones, & Lentz, 2004). The unit of analysis can also be larger such as a whole school. Practitioners of school-wide positive behavior support rely on single participant designs to make decisions regarding the effectiveness of whole school interventions (Ervin et al., 2007).

Leveraging Evidence-based Practices: From Policy to Action, Ronnie Detrich, Randy Keyworth, and Jack States

But the limitations of “scientific” or “evidence-based” policy and practice have already been exposed in recent history, specifically with No Child Left Behind (NCLB):

There were a number of implicit assumptions in the use of this approach with NCLB (Detrich, 2008). First, it was assumed that there was an established body of evidence-based interventions. Secondly, it was assumed that educators were aware of the evidence supporting different practices. A third assumption was that educators had the expertise to implement a specific practice. A final assumption was that the necessary resources were available to support effective implementation. The experience with NCLB would suggest that these assumptions are not justified. When NCLB was enacted, there was no organized resource for educators that provided information about the evidentiary status of various interventions. More recently, there are a number of organizations that summarize and evaluate the evidence supporting educational interventions such as the What Works Clearinghouse and Best Evidence Encyclopedia.

Leveraging Evidence-based Practices: From Policy to Action, Ronnie Detrich, Randy Keyworth, and Jack States

The irony of the failures of evidence-based policy in education is that we have implementation science that can and should guide how policy is crafted and implemented: “The stages of implementation science are exploration and adoption, installation, initial implementation, and full implementation (Blasé, Van Dyke, Fixsen, & Bailey, 2012).”

The SOR movement fails the very first stage: “Exploration and adoption is the phase in which all stakeholders (teachers, administrators, etc.) are involved in the decision-making in terms of defining the problem they are trying to solve and identifying possible solutions.”

This is, in fact, what I call for in my policy brief on the current policy failures in the SOR movement:

Develop teacher-informed reading programs based on the population of students served and the expertise of faculty serving those students, avoiding lockstep implementation of commercial reading programs and ensuring that instructional materials support—rather than dictate—teacher practice.

Thomas, P.L. (2022). The Science of Reading movement: The never-ending debate and the need for a different approach to reading instruction. Boulder, CO: National Education Policy Center. http://nepc.colorado.edu/publication/science-of-reading

The SOR movement is a media-based movement that has resulted in very bad and often harmful policy (see HERE, HERE, HERE, and HERE).

And thus the paradox of implementation science: “The fundamental goal of implementation science is to make sure that at each phase of implementation the necessary steps are taken to assure that an intervention is implemented with integrity.”

Most, if not all, reforms would have to be implemented with such a high degree of fidelity (likely one not possible in the real world) that all reform is necessarily doomed to be identified as a failure.

The SOR movement will be declared a failure exactly like all the similar reading reform movements before it:

It is abundantly clear that policy alone is not sufficient to improve students’ academic achievement. Since the publication of A Nation at Risk (Gardner, 1983) there has been a steady stream of policy initiatives with the intent to reform the U.S. education system. In the time period covered by these various policy initiatives there is almost 50 years of data suggesting that academic performance in reading and math as measured by NAEP has not changed in any significant way despite all of the policies and money spent (Nations Report Card, 2015).

Leveraging Evidence-based Practices: From Policy to Action, Ronnie Detrich, Randy Keyworth, and Jack States

Regardless of the identified problems and regardless of the policy solutions, education is a steady march of failed reforms—most of which are indistinguishable from the others.

One example offered by Detrich, Keyworth, and States demonstrates the fatal gap between evidence and policy:

An additional shortcoming in the development of the policy to reduce class size was that all available evidence from Tennessee suggested that class size should be 17 or less and the teacher should be credentialed and have experience. California reducing class size to 20 was without support in the available evidence so even with fully credentialed teachers, the effects may have been minimized.

Leveraging Evidence-based Practices: From Policy to Action, Ronnie Detrich, Randy Keyworth, and Jack States

In reality, even when policy is identified as “scientific” or “evidence-based,” the actual practice is distorted by ideology or practical issues of implementation (evidence-based policy tends to be too politically or financially expensive to implement with fidelity).

And there is an unintended message in Detrich, Keyworth, and States—how researchers themselves fall into ideological traps.

Similar to the flaws in media coverage of SOR (see HERE and HERE), Detrich, Keyworth, and States misrepresent the whole language movement in California (see HERE and HERE) and uncritically cite NCTQ reports that do not meet a minimum bar of scientific research (see HERE, HERE, and HERE).

SOR advocates, then, find themselves in a very real dilemma. They often resort to anger and bullying because, I think, unconsciously they recognize the corner they have painted themselves into, the hypocrisy they are trafficking in.

Education and reading reform are cycles of doomed failure because we too often lack historical context, we are prone to ideological and market bias, and we commit to standards that no one can achieve.

The anger and bullying of SOR advocates isn’t justifiable, but it is predictable.

Again, as Detrich, Keyworth, and States explain: “Without implementation science, the aspirations of evidence-informed policy, no matter how well intentioned, are not likely to result in benefit for students.” LETRS training, for example, increases teacher confidence but doesn’t raise student achievement.

In a few years, just as happened in the years after NCLB’s “scientifically based” mandate, there will be hand-wringing about reading, charges of failure, and calls for new (read: the same) solutions that we have cycled through before.

It seems the one science we are determined to ignore is implementation science because it paints a complex picture that isn’t very politically appealing.