[Header Photo by Chris LaBarge on Unsplash]

I recently saw a post on social media asking teachers whether students should be allowed to redo assignments. This question has always bothered me since I have spent 42 years grounding my teaching in requiring and allowing students to revise their work.

My courses are structured as workshop environments and the assignments (including the major assignments that are always essays) are designed as teaching/learning experiences and not as assessments.

I have also spent the great majority of my career not grading assignments and not giving tests.

These commitments seek to increase student engagement with learning and to reduce the stress often associated with students completing assignments.

Those goals, however, have remained elusive.

In the last few years, I have begun experimenting with grade contracts to help students better navigate the atypical aspects of my courses and grading approach. Here are some sample contracts:

Of course, I still must assign students grades in the courses, but I continue not to grade the assignments.

I have always been confronted with the charge of having too many As, or with the assumption that allowing students to revise work increases (inflates?) the likelihood of As. That charge is especially pointed in the current renewed cycle of concern about grade inflation, notably in higher education and at selective universities.

Critics of revision also argue students will not try when submitting assignments the first time (and thus, I have a strict minimum requirement policy that allows me not to accept inferior or incomplete work).

To be blunt, students earn grades; teachers do not give grades.

Further, if and when my students earn As, I see that as success; when students fail or earn lower grades, I typically feel as if I have also underachieved.

This past fall, I taught 3 courses with 53 students receiving grades; as you might suspect, almost all of them contracted for an A. However, as the semester drew to a close, many students were on the precipice of failing (not meeting the minimum requirements of the course). As a result, I offered two extensions during the week of exams (the contract specifies that students must meet the grade contract/course minimum requirements by the last day of the course in order to be allowed to submit their final portfolio/exam).

Here is the grade distribution from fall (please note that I teach in a selective university and these students were high achieving in high school):

- A = 30

- B = 8

- C = 13

- F = 2

Notable is that the first-year students were outliers in terms of being able to achieve As:

- A = 4

- B = 1

- C = 7

The grades for fall were particularly frustrating for me since I think the most recent iterations of my contracts and assignments are far superior to earlier versions, and since I am in year 42 as a teacher, I do think I am a better teacher.

Here are a few thoughts about grades and contracts as well as how current students are struggling as a result of having been in school during the Covid era.

First, I must stress that not grading assignments and using grade contracts asks more of students, not less. The key is that I have minimum expectations for submitted work and then minimum expectations for the additional work required to meet the A-range.

For example, I had a student submit their major essay in one course without any citations in the essay. I responded that the work could not be accepted and provided support material for resubmitting the work.

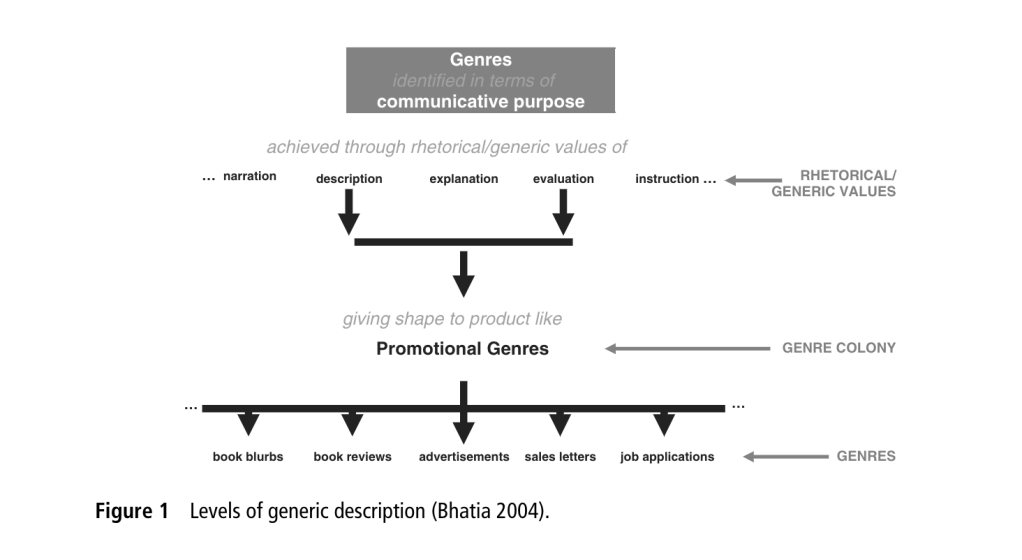

That submission, in effect, did not count. Students tend to recognize that making their best effort upfront benefits them. [Note the minimum requirements in this discourse analysis assignment used in two courses.]

In a traditional graded course, the student could have just received an F (and never really engaged in the learning experience), had success on the other assignments and tests, and then maybe received a B or C in the course.

What is lost in that traditional scenario is learning.

Requiring and allowing revised work is an individualized teaching/learning process.

Although students often become frustrated by the expectations and my strictness, here is one student’s response to a course this fall (a student who had several submissions not accepted):

> I just wanted to reach out and thank you for all of your help and keen attention with my paper. I think I learned more from that assignment than I did in my FYW. But, I know it took a lot of time and effort on the back end and I just wanted to reach out and thank you for all of your edits and feedback! I don’t think I have a paper from my college experience that is so technically detailed and I cannot even begin to express how much I learned when it came to APA. I see it everywhere now and am very grateful for the APA skills this assignment pushed me to gain.

A few semesters ago, a former student from my upper-level writing/research course (see the assignment here) contacted me to thank me. They acknowledged their frustration during the course but said they had come to realize its value because they were in a doctoral program where the experience was bearing fruit (my course required annotated bibliographies and APA citation).

Using assignments as teaching/learning experiences and not assessments in the context of grade contracts allows me to be more rigorous while also raising expectations for student engagement.

However, Covid-era students have been taught in traditional courses and by traditional grading that any work submitted must receive some credit, and because of the many disruptions to schooling, they have also been taught that their perception of trying hard also deserves credit (in the form of grades).

I believe these dynamics are particularly true for high-achieving students.

Students have directly told me that traditional tests and grades are easier for them to navigate and make it easier for them to achieve the grades they want (even as they admit that they learn more under the expectations I implement).

Frankly, the struggles I witness represent one of the most ignored flaws in education in the US: the tension between grades and learning.

Grading doesn’t reflect learning well, and grading often inhibits learning.

My commitment to ungrading, requiring/allowing students to revise, and grade contracts is a commitment to learning (and teaching).

It remains discouraging, however, that this commitment often creates stress and even failure for some students who are the product of grade-centered traditional schooling.