The newest NAEP crisis (until the next one) concerns history and civics NAEP scores post-pandemic.

Like the NAEP crisis around reading, which rests on a misunderstanding of "proficiency" and of what NAEP shows longitudinally (see Mississippi, for example), this newest round of crisis rhetoric exposes a central problem: how the media, the public, and politicians respond to test data, and the practice of embedding proficiency mandates in accountability legislation.

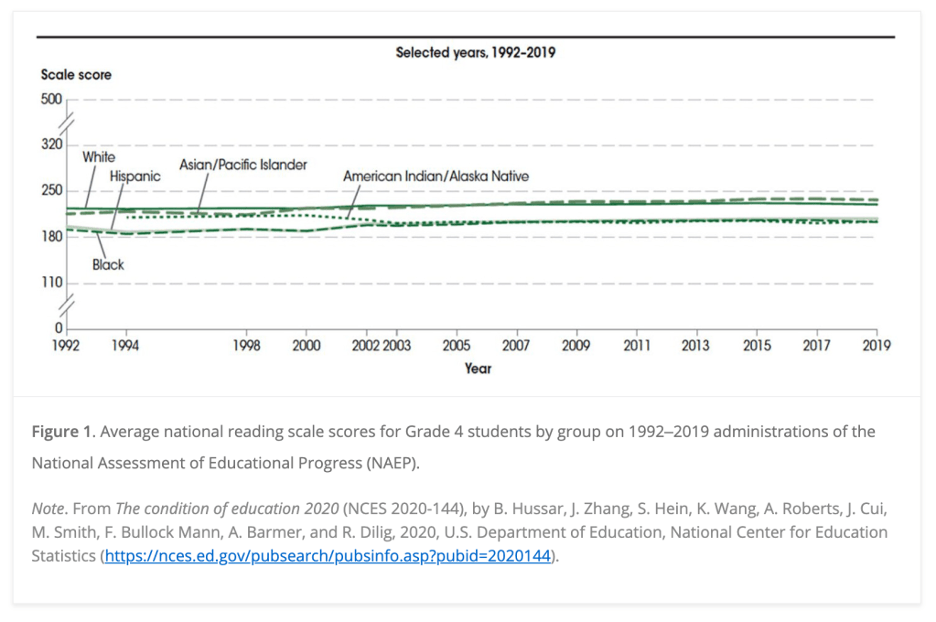

As many have noted, claims of a reading crisis are contradicted by longitudinal NAEP data.

But a possibly more serious problem is the confusion of NAEP achievement levels with commonly used terms such as "grade level proficiency" (notably in reading).

Yet, as the NAEP website clearly explains: "It should be noted that the NAEP Proficient achievement level does not represent grade level proficiency as determined by other assessment standards (e.g., state or district assessments)."

The claim, repeated by the public, the media, and politicians, that two-thirds of students are below grade level proficiency is therefore false. It rests on a misreading of NAEP data and a misunderstanding of the term "proficiency," which is defined by each assessment or state rather than being a fixed metric.

Here is a reader for those genuinely interested in understanding NAEP data, what we mean by "proficiency," and why expecting all students to be above any level of achievement runs counter to human nature (recall NCLB's failed mandate that 100% of students reach proficiency by 2014):

- Beware Grade-Level Reading and the Cult of Proficiency

- NAEP achievement levels

- What’s A Big Change in State NAEP Scores?, Tom Loveless

- The NAEP proficiency myth, Tom Loveless

- Common Core Testing: Who’s The Real “Liar”?, Jersey Jazzman

- Gaslighting Americans about public schools: The truth about ‘A Nation at Risk’, James Harvey

- No Crisis, No Miracles: The False Narratives of Education Journalism

- Schools Matter: Those Who Cannot Remember the Past