She was born in November 1963/The day Aldous Huxley died/And her mama believed/That everyone could be free

“Run, Baby, Run,” Sheryl Crow

In the summer of 1975, I was diagnosed with scoliosis and put in a form-fitting plastic body brace anchored with aluminum rods and spanning from my pelvic bone to my chin. This was a hell of a way to start ninth grade at Woodruff Junior High.

I would wear that brace 23 hours a day, gradually weaning myself off the support as my vertebrae both (mostly) repaired their disfigurement and eventually stopped growing; this meant I wore the brace for much of my high school experience as well.

My childhood and teen years were a contradiction of Southern racism, ignorance, and bigotry warmly wrapped in the blanket of my loving and doting working-class parents. My scoliosis was a significant financial burden on my parents (who never flinched at the medical care it required), but it also in some ways broke their hearts.

I was a skinny and very anxious human, deeply self-conscious and introverted before the years of the brace came upon me in the roiling shit-storm of adolescence.

It was at this juncture of my life that I discovered comic books, which now seems like a logical extension of the fascination for science fiction I inherited from my mom (she loved classic black-and-white B-movies, always claiming The Day the Earth Stood Still as her favorite film).

Once again, my parents never wavered when I began collecting and drawing from Marvel comics in the mid-1970s. They drove me to the local pharmacies to buy new comics and even bought a pretty large and important collection from a guy who had advertised hundreds of comics for sale in the local newspaper.

By high school graduation, I had amassed essentially every comic book Marvel published in the 1970s.

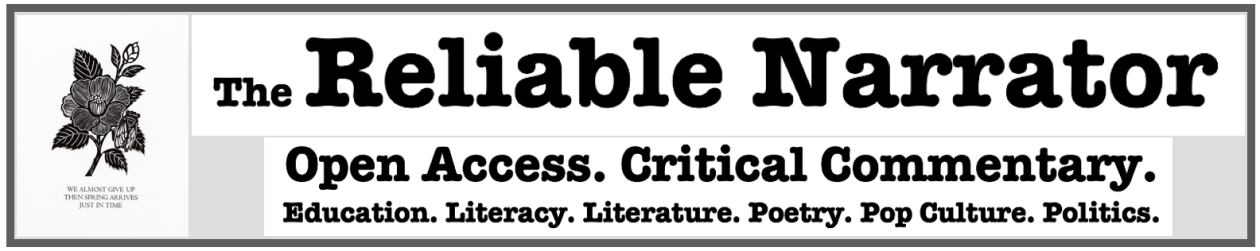

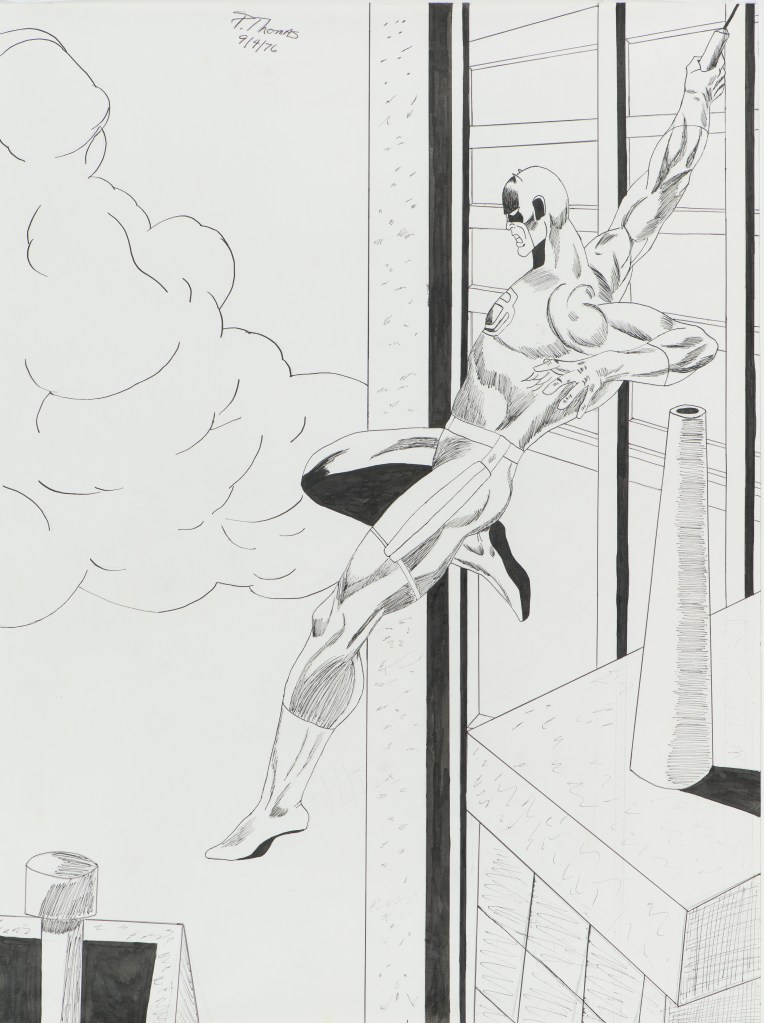

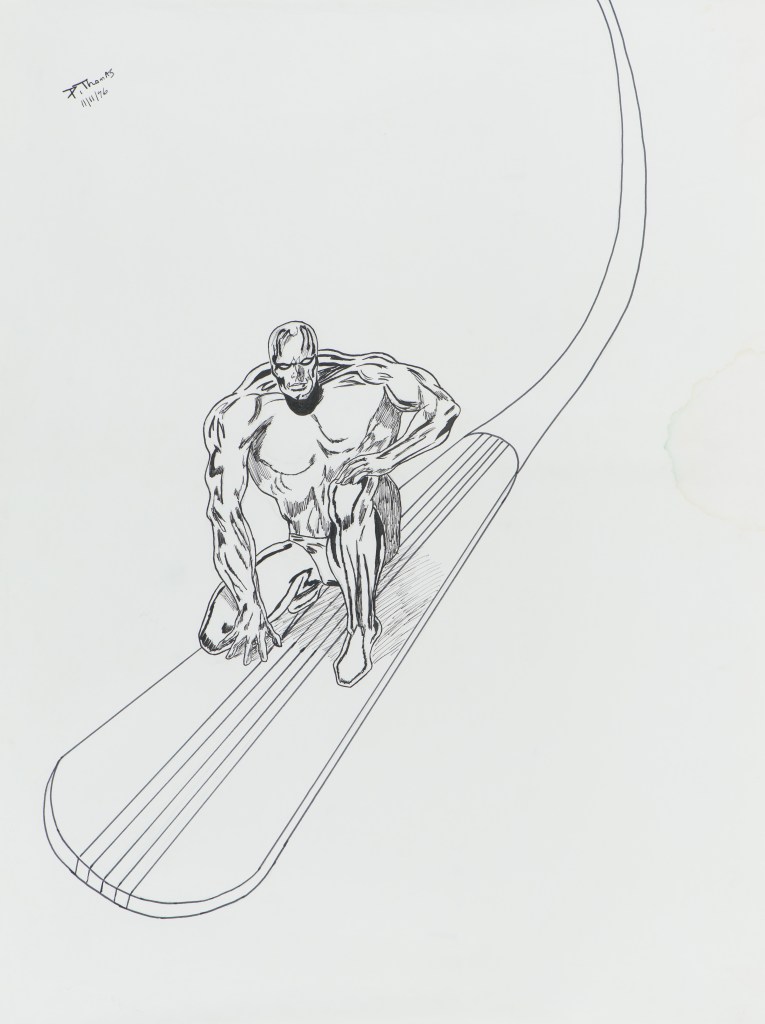

It would take me many years to recognize that my comic book collecting and science fiction reading were the foundation upon which I eventually chose to be a high school English teacher and came to recognize that I am a writer (although I initially clung to being a comic book artist since I spent hours and hours standing at our kitchen bar drawing from the comics I collected). (See my original artwork from the mid-/late 1970s below.)

Just thirteen days away from turning 60, I am baffled that I cannot identify specifically when I stopped collecting comics, some time around graduating high school and attending college. I assume it seemed childish at some point even though I kept my 7,000-book collection well into marriage.

I do know that when we bought our first townhouse, I sold that collection for way less than it was worth, both in dollars and to my soul. I held onto the full run of Howard the Duck, but let everything else fund my misguided pursuit of the corrupted American Dream: home ownership.

At some point in the late 1980s and early 1990s, I briefly returned to collecting, prompted by several of my high school students and the Frank Miller rebooting of Batman as well as the Tim Burton/Michael Keaton films. This coincided with the 1990s boom/bust of mainstream comics by Marvel and DC, and once again, adult life kept me from really fully engaging in something I love.

When I moved to higher education in 2002 after 18 years teaching high school English, I found a way to merge my adolescent love for comic books and my adult life—comic book scholarship and blogging. I also published one book on comic books, which allowed me a justification for buying comics and graphic novels once again (and a way to move beyond super hero comics). I learned a great deal (and made several embarrassing mistakes) when I merged my fandom with my scholarship, but that work about a decade ago, once again, didn’t really stick—although it certainly didn’t fade away either.

Recently, I allowed myself to re-commit to collecting, focusing on Daredevil and then adding the newest Wolverine run. I am back engaged with a local comic book store just minutes from where I live, and I also collected the recent X of Swords run from Marvel. (See part of my Xmas haul below.)

And yesterday, something very interesting happened for me, again just two weeks from turning 60.

Concurrent with my reconnecting with comic book collecting, I have been embroiled in the newest reading war around the “science of reading” and also making a very feeble attempt at learning to play video games (initially Minecraft).

I never became a gamer because I have always struggled with the controls, and with advancing age that hurdle has become even more pronounced. But I also experienced a significant amount of disorientation and felt extremely (for lack of a better word) dumb.

Starting a game left me paralyzed, repeatedly asking what I was supposed to do. I often was coached with this advice: Just explore and watch for what the game shows you to do.

That meant nothing to me, even less than nothing. In fact, I soon realized that I was simply unable to read the video games while experienced gamers have internalized hundreds of signals and cues to the point that “what you are supposed to do” seems obvious (see this on gaming, for example).

One of my foundational complaints about the “science of reading” movement has been its embracing a simple view of reading, and here I was, at 60, experiencing how incredibly complex reading is—that reading is far more than decoding print (and is even often apart from print).

Gaming, like reading comic books, is a holistic experience of text and images, all guided by prior knowledge and experiences and by the blending of many different kinds of codes, some unique to a single environment and others common across the medium/genre/form.

Each subgenre of gaming has its own commonalities, just as a subgenre of comic books such as super hero comics does.

Although I have recognized myself as a writer for forty years now—and never lift a pencil to draw any more—I was pulled back into comic book collecting because of the artwork, first Daredevil (a series that has always had distinct and powerful artists working on the character, in my opinion), then the rebooted Wolverine series, and now the incredible artists working on X-Men.

In several of my college courses, I have integrated comic books and graphic novels, often to students who have never read comics. They almost always admit that reading comics is much harder and takes much longer than they expected. It wasn’t, they discovered, like reading a text-only essay or book.

As I have been diving back into the X of Swords series and the rebooted X-Men series spearheaded by Jonathan Hickman, I have noticed my haphazard reading style of comics, very art-based and not very sequential (I glance around the entire spread and often dart back and forth among the text and panels).

And so here is the very interesting thing from yesterday.

In issue 4 of X-Men (vol. 5), Magneto quotes Aldous Huxley:

A sucker for literary references, I paused to search the quote, and then returned to reread the pages leading up to and after the use of the quote. Then, I realized something unusual that I had not noticed when first reading:

The omission of “care.”

Every time I read this, I still insert “care” automatically and have to force myself to see that it isn’t there (as if Professor X is doing it for me each time).

There are dozens of cues in those three panels, some of them text (and one of them the absence of assumed text).

As I count down the days until I turn 60, I am living some of the fantastical elements we associate with children’s stories, comic books, and science fiction—a pandemic, a Capitol siege, and the many eras of my own life overlapping with each other as if I am both living my current life and going back in time.

Life is no comic book or video game, but as I explore the things around me, I am tasked with paying attention to all the cues of what I am supposed to do, and that remains as complicated a task in 2021 as it was in 1975.